… And why they’re all misleading and/or faulty.

Article by Ben Griffis

There are numerous widely used metrics for analyzing defensive performances in football. They all attempt to home in on specific elements of defensive play: tackles, interceptions, clearances, aerials, etc.

All of these metrics are inherently flawed and shouldn’t be used to evaluate defenders, and especially shouldn’t be used to make decisions. Widely-used and available event-based defensive metrics simply cannot capture what most of defending is: stopping the opposing player/team.

Why are they wrong?

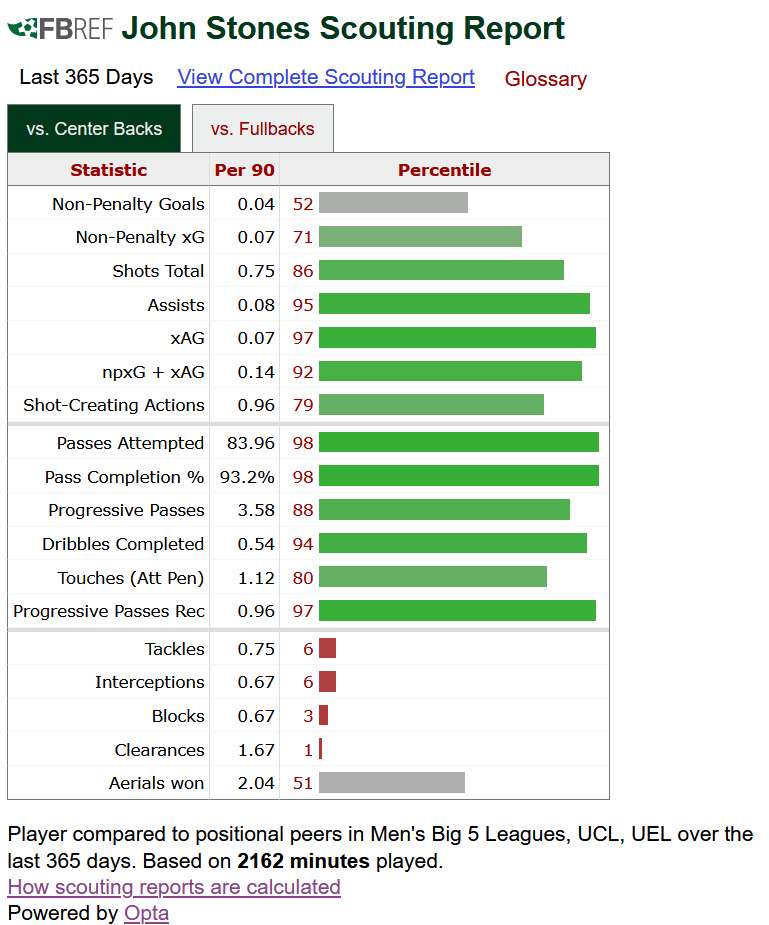

There won’t be tons of data in this essay, but I’d like to set the stage with data. Below is a snapshot of John Stones’ scouting report from FBRef.com. The metrics at the bottom are his defensive metrics.

Looking at this, John Stones appears to be one of the worst center backs in the league at defending. Now, most people will know that this data is not adjusted for possession, and that Manchester City have insane levels of possession. Possession-adjusted data (pAdj) does help to offer a better picture of player data, as we can adjust defensive metrics up in high-possession teams, and down in low-possession teams.

The theory behind pAdj metrics is that a player in a team like Manchester City will have much less opportunity to defend than a player in a team like Bournemouth, for example, so City players’ defensive metrics are adjusted up. On the flip side of that, Bournemouth players’ defensive metrics would be adjusted down, as they have more opportunity to increase those numbers than City players do.
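As a rough illustration of that theory, the sketch below scales a per-90 defensive count to a 50% opponent-possession baseline. The linear scaling, the function name `possession_adjust`, and the example numbers are all assumptions made for illustration. Public pAdj implementations differ in their exact formula, and none of these figures are real player data.

```python
def possession_adjust(per90: float, team_possession_pct: float,
                      baseline_pct: float = 50.0) -> float:
    """Scale a per-90 defensive count to a baseline share of opponent possession.

    Assumption: defensive opportunity grows linearly with the share of the
    ball the opponent has. Real pAdj formulas may use a different curve.
    """
    if not 0.0 < team_possession_pct < 100.0:
        raise ValueError("team_possession_pct must be strictly between 0 and 100")
    opponent_possession_pct = 100.0 - team_possession_pct
    return per90 * baseline_pct / opponent_possession_pct


# Illustrative numbers only, not real player data.
# A defender in a 65%-possession team: the opponent has the ball 35% of the time.
high_possession_defender = possession_adjust(per90=1.0, team_possession_pct=65.0)
# A defender in a 40%-possession team: the opponent has the ball 60% of the time.
low_possession_defender = possession_adjust(per90=2.4, team_possession_pct=40.0)

print(f"high-possession team: 1.0 raw -> {high_possession_defender:.2f} adjusted")
print(f"low-possession team:  2.4 raw -> {low_possession_defender:.2f} adjusted")
```

The high-possession defender’s number moves up and the low-possession defender’s number moves down, which is all the adjustment is meant to do.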

However, even possession-adjusted defensive metrics are still wildly inappropriate to use when evaluating defenders’ defensive skills.

John Stones, even if the FBRef data above were adjusted for possession, would still come out looking like a poor defender. Yet I’m fairly certain almost any team in any league would love to slot him into their starting XI. This article isn’t specifically about John Stones or his defensive ability, though. I’m using him and his FBRef report as examples.

The problem with widely-used and available defensive metrics is that much of the time, the best defensive action in a given moment is not to make a tackle or block a shot, for instance. Metrics used by the masses simply don’t capture when a defender’s positioning forces the opponent to pass back to their defensive midfielder. They don’t capture when a defender successfully catches an opposing striker offside. Nor do they capture when a defender is able to force the striker to take a shot with their weaker foot, or even take a horrible near-post shot because they closed down both the passing lane to the supporting teammate and the angle to the far post. Most metrics don’t even capture when defenders use their strength to shepherd a ball out for a goal kick (StatsBomb does include something basic along these lines, but its data is not widely available to the masses outside of historic open data).

Interceptions can be skewed by playing against teams who are really bad at passing, or who pump the ball long most of the time. Shot blocks can be flawed because some teams, like Liverpool, instruct defenders not to block shots: Alisson is great at saving long shots, and a player trying to block could mean he sees the shot late, or a wicked deflection redirects it. Aerials can be flawed because some players might be really strong in the air, which leads them to go up more, while others might prefer to let the opponent win the ball before taking it off them right away. Tackles are also flawed and, in my opinion, likely the most flawed defensive metric of all (if “flawness” is a scale…)

If a defender chose to tackle the opponent each time instead of trying to force them to move or pass backwards, they wouldn’t have a job because they would be too much of a liability. Unless they won 90%+ of their tackles, they would cause more harm than good.
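To see where a threshold like that could come from, here is a minimal sketch of the break-even arithmetic under a deliberately simple assumption: a won tackle is worth a fixed gain and a lost tackle carries a fixed cost, both measured against the alternative of staying on your feet. The function name and the values are hypothetical, not measured quantities.

```python
def break_even_win_rate(gain_if_won: float, cost_if_lost: float) -> float:
    """Win rate p at which p * gain - (1 - p) * cost equals zero."""
    if gain_if_won <= 0 or cost_if_lost <= 0:
        raise ValueError("gain and cost must both be positive")
    return cost_if_lost / (gain_if_won + cost_if_lost)


# Hypothetical values: a lost tackle hurts nine times as much as a won one helps.
threshold = break_even_win_rate(gain_if_won=1.0, cost_if_lost=9.0)
print(f"break-even tackle win rate: {threshold:.0%}")
```

Under those assumed values the break-even rate is 90%. Put differently, a 90% threshold amounts to saying that a lost tackle costs roughly nine times what a won tackle gains, which fits the idea that a beaten defender is taken out of the play entirely.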

Much of defending is forcing the opponent to do something they don’t want to do, or forcing them to do nothing instead of something. Or even making them do something worse than they normally do. This goes for both teams as a whole and players. The above examples are player-specific, but can be generalized to team-level defending.

It could be argued that the best defenders shouldn’t have to make many tackles. Paolo Maldini has famously said “if I have to make a tackle, then I’ve already made a mistake”. While there’s certainly nuance to that, and tackles are a vital part of a defender’s game, there’s logic behind it.

Positioning simply cannot be captured with event-based metrics, which are what most datasets and websites tracking player statistics offer. But positioning is a key element of a defender’s performances. Positioning, in my opinion, is the common denominator of almost all defensive play.

Positioning

Players making many tackles, even successful ones, can often be forced into going to ground because of poor positioning. Tackling is inherently risky and should be avoided by the best defenders, when possible, because if you don’t win the challenge, you’re either taken out of the play and have to recover, or you give away a foul (and potentially get a card, which will impact how the defender can play the rest of the match).

Good positioning in defense will mean a defender doesn’t have to make as many tackles, and can force possession-losing passes, attack-halting back passes, poor shot decisions, and more. Tackling the opponent is of course still important in plenty of situations, but the metric itself, like the other main defensive metrics, is not aligned with what defending really is.

Positioning also impacts interceptions, although inversely to tackles. Defenders with great positioning will likely have more interceptions than defenders with poor positioning, all else held constant, because they will be in a better location to cut out a pass. Of course, a defender’s ability to read the game plays a massive role here as well.

Reading of the Game

Along with positioning, a defender’s ability to read the game is vital to their performances. This goes for all footballers, but the nature of defending being off-ball means the ability to read a situation and understand how an attack might develop is key.

For example, being able to see what the opponents are doing and where they’re positioning their players in relation to a defender’s teammates and the ball is important in determining the best position for a defender to be in. In transition, a defender’s reading of the game (coupled with positioning) and understanding of the strengths and weaknesses of the opposing players could be the deciding factor between conceding a goal and earning a goal kick after a bad shot. If a right-footed striker was countering down the left with a supporting teammate on the right, the defender could position themselves in a way that shuts off the passing lane to the supporting teammate and also forces the striker to shoot with their weak foot. Virgil van Dijk is often really effective in these situations. But widely-used metrics can’t capture this skill.

In fact, in the above example, a player going to ground to tackle the opponent might gift a goal. Even if they win the tackle, it’s still very risky. Of course, the best defenders know when to make this tackle and have great timing, but they also know when not to go to ground and know how the play will develop so that they can proactively defend against it.

How Might We Start Addressing This?

Single metrics for defenders are flawed, but there are ways we can use event data to start getting better pictures of defenders. We have to flip regular analysis on its head and look at the opponent instead of the defender themselves. In the thread below, I offer some thoughts on how we might be able to do this.

The basic theory behind this idea is in line with what I mentioned earlier: forcing players to do something that’s not what they wanted to do or what they’re good at doing. Using event data, which isn’t optimal anyway, we’d have to use opponents’ relative success rates or patterns as a proxy for defensive skills.

In that thread, we see that the focal player, Bakhtiyar Zaynutdinov, is involved in making CSKA’s left side less dangerous for the opponent than CSKA’s right side. This is not a perfect analysis or method, but it is much better than looking at how many tackles, interceptions, and aerials Zaynutdinov has made this season.
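A minimal sketch of that kind of aggregate comparison, assuming each opponent action has already been labelled with the flank it came down, whether it succeeded, and some danger value. The `OpponentAction` fields, the `threat` number, and the sample rows are hypothetical and are not taken from the thread or from any real match.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class OpponentAction:
    flank: str       # side of the defending team's back line attacked: "left" or "right"
    completed: bool  # whether the opponent's action succeeded
    threat: float    # hypothetical danger value of the action (an xG- or xT-like number)


def opponent_profile_by_flank(actions):
    """Summarise how successful and how dangerous opponents were on each flank."""
    totals = defaultdict(lambda: {"actions": 0, "completed": 0, "threat": 0.0})
    for action in actions:
        bucket = totals[action.flank]
        bucket["actions"] += 1
        bucket["completed"] += int(action.completed)
        bucket["threat"] += action.threat
    return {
        flank: {
            "success_rate": t["completed"] / t["actions"],
            "threat_per_action": t["threat"] / t["actions"],
        }
        for flank, t in totals.items()
    }


# Made-up sample: opponents attacking the defending team's left and right sides.
sample = [
    OpponentAction("left", True, 0.02), OpponentAction("left", False, 0.00),
    OpponentAction("left", False, 0.01), OpponentAction("left", True, 0.01),
    OpponentAction("right", True, 0.05), OpponentAction("right", True, 0.08),
    OpponentAction("right", False, 0.02), OpponentAction("right", True, 0.05),
]
profile = opponent_profile_by_flank(sample)
for flank, stats in profile.items():
    print(flank, stats)
```

In this invented sample the opponents complete fewer actions and generate less threat per action down the left, which is the pattern you would then try to link to who defends that side. It says nothing yet about which individual deserves the credit.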

This is just one example and uses aggregate data. A better way to use event data to analyze defensive skills would work at a much more granular level: you would need to tag every event an opponent does (or doesn’t do!) while being marked, pressured, or tracked by the focal defender, etc. You get the picture.
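At the granular level, the idea might look something like the sketch below: every opponent action is tagged with the defender involved and what that defender was doing, and the outcome is compared with the same opponent’s usual success rate. The tag names, fields, players, and baseline figures are all hypothetical, since this kind of tagging is not available in public data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaggedAction:
    attacker: str        # opponent performing the action
    defender: str        # focal defender involved
    defender_state: str  # hypothetical tag: "marking", "pressuring", "tracking"
    succeeded: bool      # did the attacker's action come off?


def success_drop(actions, defender, baseline_rates):
    """Average of (attacker's usual success rate - observed outcome) over all
    actions attempted against `defender`. Positive values mean attackers did
    worse than usual. Returns None if the defender faced no tagged actions."""
    diffs = [
        baseline_rates[a.attacker] - (1.0 if a.succeeded else 0.0)
        for a in actions
        if a.defender == defender
    ]
    if not diffs:
        return None
    return sum(diffs) / len(diffs)


# Invented baseline success rates for two opponents across all their matches.
baselines = {"Striker A": 0.8, "Winger B": 0.6}
tagged = [
    TaggedAction("Striker A", "Defender X", "marking", False),
    TaggedAction("Striker A", "Defender X", "pressuring", True),
    TaggedAction("Winger B", "Defender X", "tracking", False),
    TaggedAction("Winger B", "Defender X", "pressuring", False),
    TaggedAction("Winger B", "Defender Y", "marking", True),
]
drop_x = success_drop(tagged, "Defender X", baselines)
print(f"Defender X: opponents' success rate drop of {drop_x:.2f}")
```

A real version would need far more events per defender and some control for game state and location, but the shape of the calculation is the point: the defender is credited for what the opponent failed to do, not for the defender’s own on-ball events.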

The important thing to note is that defender-focused events are often flawed, and while all events are flawed, expanded opponent-focused event data would be a much better basis for analyzing defenders. However, this data isn’t available to the public, which further exacerbates the misuse of defensive metrics. That’s not any party’s fault, but it’s where we’re at in public football analysis.

Final Thoughts

Defensive metrics are flawed. Each and every one of them. Many are dependent on the opponent doing something, and some may even be indicators of poor skills in other defensive areas. Popular metrics also can’t capture key elements of defending. Deterring an opponent from doing something they want or are trying to do is a central part of defending, and is ignored by all metrics including event data. Even allowing an opponent to do something like pass, cross, or shoot could be successful defending, if the defender forces the attacker to perform that action worse than they normally do.

There are ways to begin addressing these copious issues, but none are all that great with the data that’s currently available to the public.

Analyzing defenders with data will always be difficult, and might even involve much more advanced statistics than analyzing attacking skills. A metric I’ve developed in the past uses a method that we could use to start addressing the issues I’ve written about here, but it would require data that’s not affordable or available to the public (in terms of getting up-to-date data during a season) as well as a decent understanding of the statistics behind the method. It’s just not as approachable as summing up or averaging out a specific number of events like shots, passes, crosses, etc.

So much of what makes a great defender great is currently missing from the data we have available. This is of course nobody’s fault, but a quirk of data being focused on the actions a player makes when on the ball, and defense being, by nature, off the ball.

But regardless of the root cause, we’re currently in a situation where widely-available data and metrics used to analyze defenders can often be antithetical to defending itself. There’s a disconnect between on-ball events and quality defensive skills in current data accessible to the public.
